• Generative AI can produce fast, fluent answers that feel like understanding but often mask gaps in knowledge.

• Fluency isn’t insight, and plausible outputs aren’t the same as critical thinking.

• To avoid the “illusion of understanding,” use AI thoughtfully.

• Tools like Cluing AI Chat help you reflect, connect ideas, and make sense of what you know.

Seeing Past the Illusion of Understanding: How AI Can Help

We live in a world where answers arrive faster than reflection. Generative AI can summarize, brainstorm, and outline complex topics in seconds.

It’s powerful and tempting - like having a smart assistant at your side.

But here’s the catch: fast doesn’t mean deep, and fluent doesn’t mean you understand.

Researchers call this phenomenon epistemia - the illusion of understanding that happens when AI’s polished answers make us feel smarter than we really are.

AI can trick us into overestimating our knowledge. The more convincing it sounds, the more likely we are to skip real thinking.

The term draws on the Greek epistḗmē (ἐπιστήμη), meaning 'knowledge' or 'understanding'. The related adjective epistemic is used primarily in philosophy (including epistemology, the field named from the same root) to mean "related to knowledge or knowability".

The Information Management Problem - Even Before AI

Even before generative AI, we all faced a fundamental challenge: a flood of material online that encourages repetition over reflection.

The common approach became: find the information, use it, and move on.

Unique thinking, critical reflection, and original insight often got left behind.

AI amplifies this tendency. Any question can get a quick, plausible answer, and the temptation is to accept it at face value rather than interrogate it. What's lost is discovery.

Actual understanding happens when answers aren’t obvious, questions are messy, and insight comes from wrestling with complexity.

Four Ways AI Can Shape Research and Risk Overconfidence

Messeri and Crockett describe four ways scientists rely on AI:

- AI as Oracle - to search, summarize, and evaluate literature.

- AI as Surrogate - to generate data when collection is difficult.

- AI as Quant - to analyze vast datasets beyond human reach.

- AI as Arbiter - to adjudicate studies objectively, removing human bias.

These roles illustrate AI’s power: efficiency, productivity, and the promise of objectivity.

But they also highlight the danger: when we rely too heavily on AI, we risk narrowing our perspective to what the tool can do, rather than what is meaningful or nuanced in the real world.

Without human judgment, we might feel we “understand” a problem fully, when in reality we’ve merely seen a polished approximation.

Why Human Judgment Remains Irreplaceable

Humans generate meaning. AI generates plausible patterns. Humans use context, intuition, and purpose; AI optimizes coherence. Fluency is not understanding. Certainty is not truth.

In fields where tacit knowledge matters, like leadership, ethics, healthcare, and research, accepting AI outputs blindly risks replacing judgment with a polished illusion.

Students and researchers may believe they understand a topic without having wrestled with its nuances, limitations, or underlying assumptions. Epistemia seduces us into thinking we know more than we do.

Fluency can be seductive. Plausibility can be convincing. Insight requires curiosity, reflection, and critical engagement.

Amplify Your Insight, Don’t Replace It

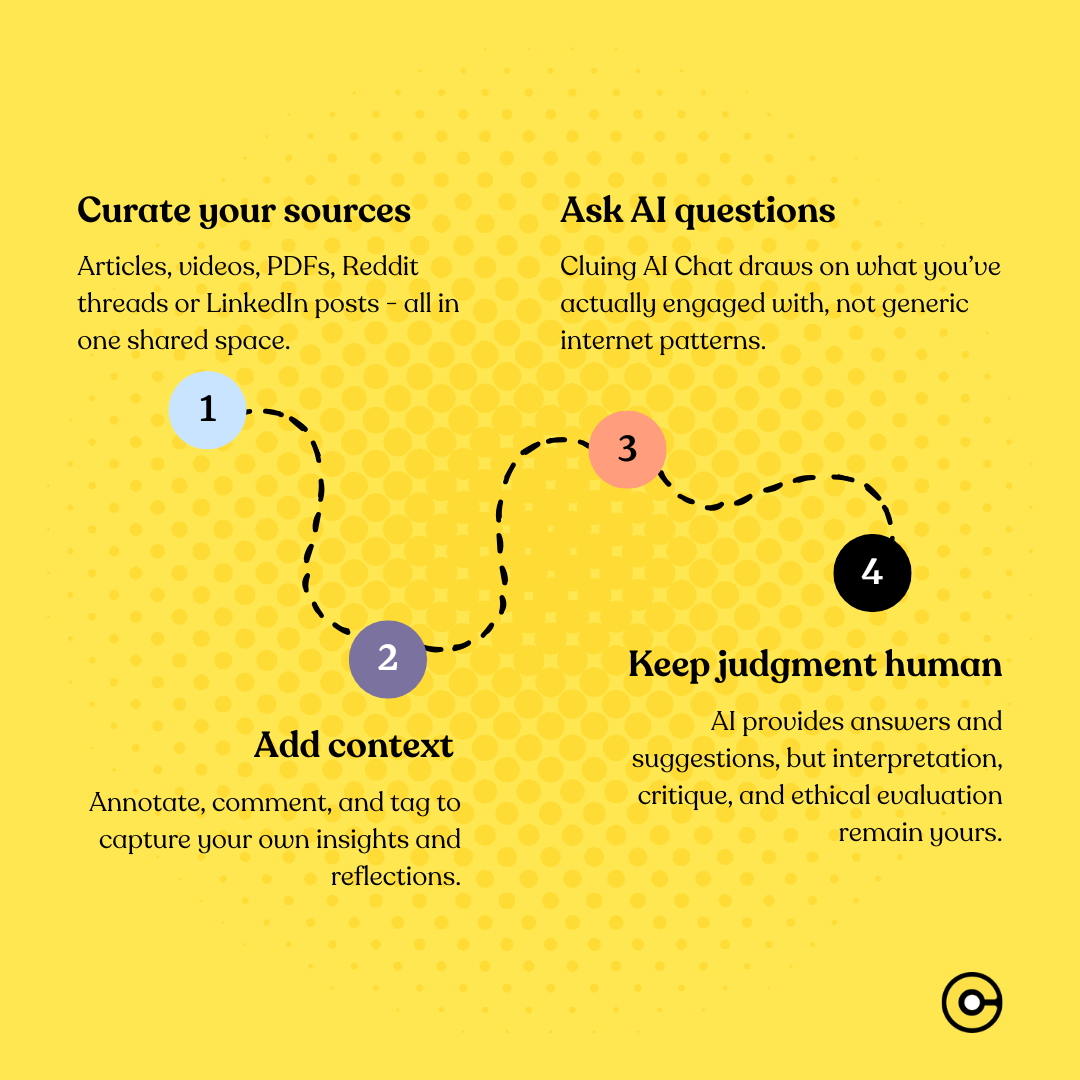

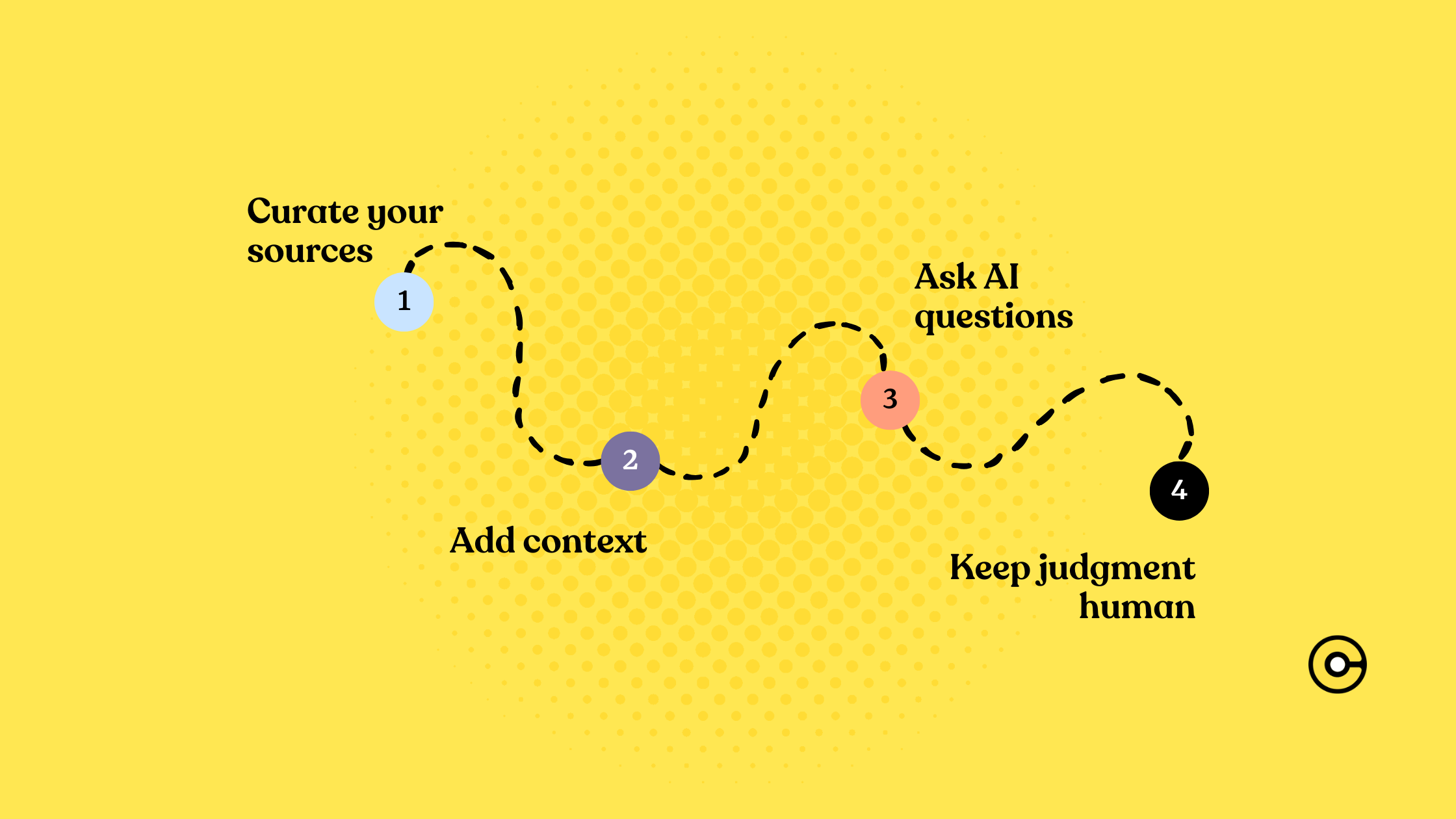

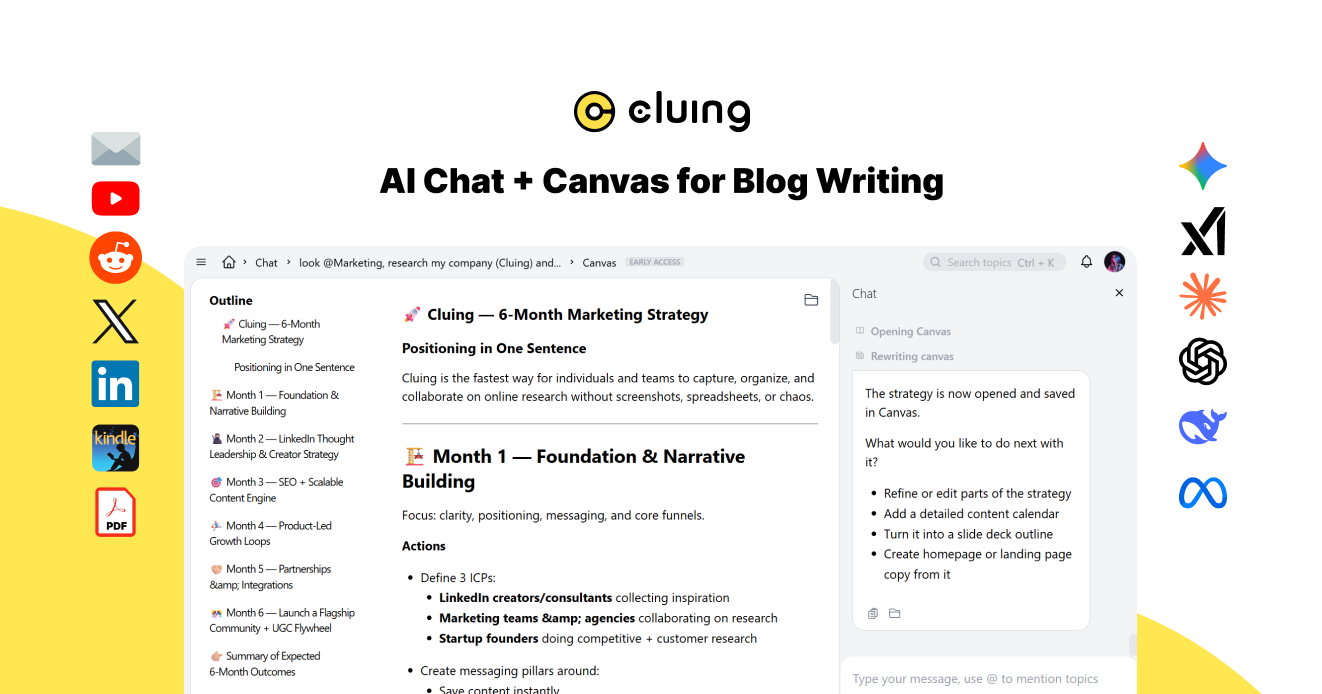

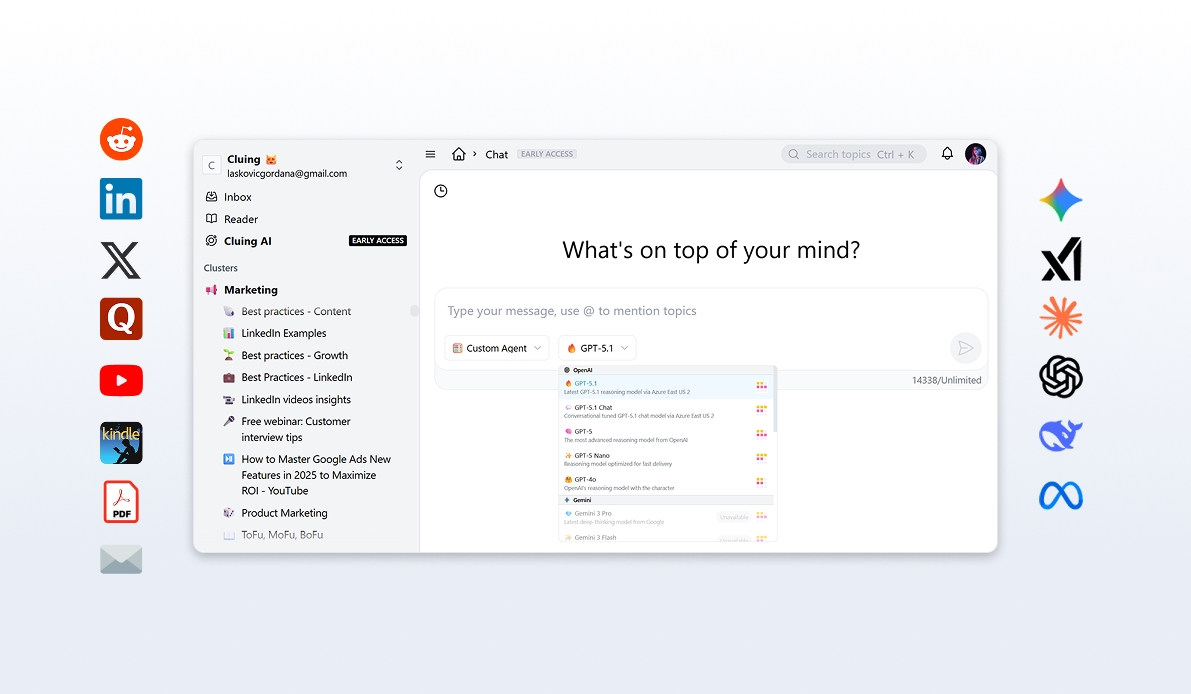

This is where Cluing AI Chat offers a different approach.

It doesn’t promise instant understanding or replace judgment. Instead, it helps users see past the illusion by making the knowledge workflow transparent and reflective:

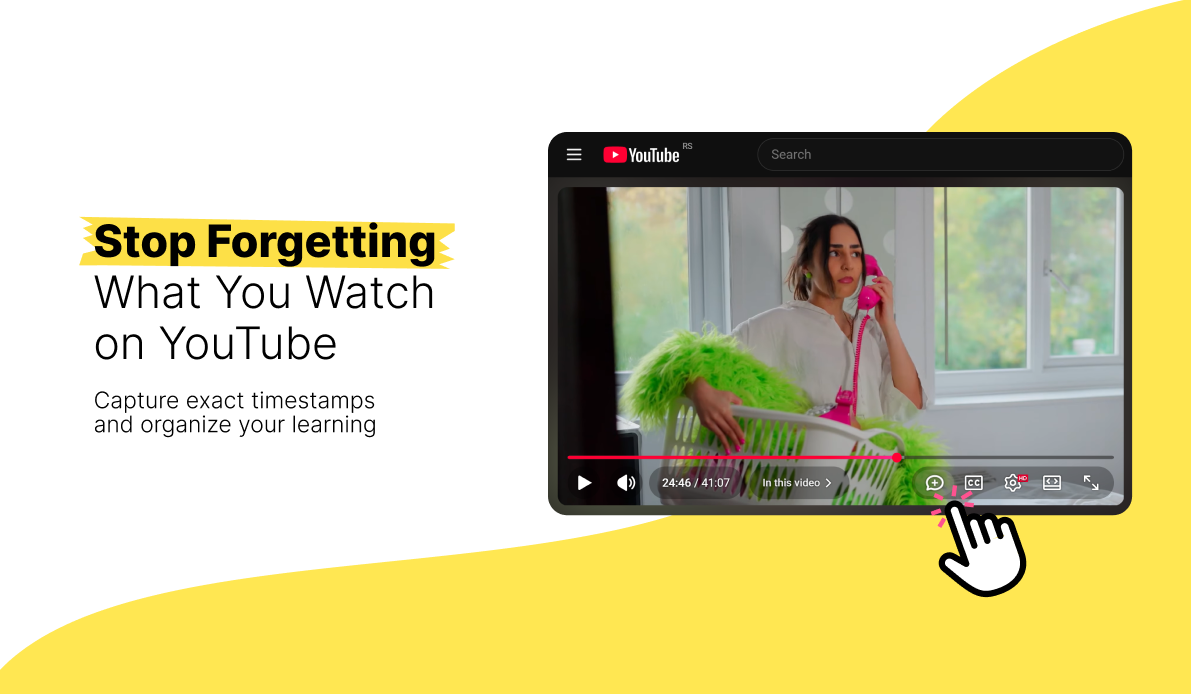

- Curate your sources: Articles, videos, PDFs, Reddit threads or LinkedIn posts - all in one shared space.

- Add context and commentary: Annotate, comment, and tag to capture your own insights and reflections.

- Ask AI questions informed by your own knowledge: Cluing AI Chat draws on what you’ve actually engaged with, not generic internet patterns.

- Keep judgment human: AI provides answers and suggestions, but interpretation, critique, and ethical evaluation remain yours.

Instead of producing answers for you, Cluing helps you interrogate, connect, and make sense of the knowledge you already have. It amplifies your ability to reflect rather than shortcut it.

A Call for Reflection

Generative AI is seductive. Plausible outputs and confident fluency create an illusion of understanding that can fool even the most sophisticated researchers.

Insight requires effort, context, and critical engagement.

The challenge, and the opportunity, is to use AI to expand thinking without losing judgment.

Tools like Cluing AI Chat aren’t oracles - they’re partners that help you navigate, question, and synthesize knowledge without fooling you into thinking you already “get it”.

FAQ

What is the "illusion of understanding" in AI?

It's when AI's fluent, confident outputs make you feel you understand something, even if you haven't reflected on it or questioned it.

Why can AI outputs be misleading?

They are plausible and polished but may hide gaps, missing context, or contradictions in the information.

How can I avoid falling for this illusion?

Reflect, question, compare sources, and apply your own judgment instead of accepting outputs at face value.

What is Cluing AI Chat, and how does it help?

It’s a tool that organizes your sources, lets you add context, and answers questions based on what you’ve engaged with, keeping judgment human.

Where does real understanding come from?

From grappling with messy questions, comparing information, reflecting, and connecting ideas.
