We like to think we understand what we know. We trust our judgments, our decisions, and increasingly, the answers we get from AI tools. But that confidence can be misleading.

Metacognitive Miscalibration

Metacognitive miscalibration is the mismatch between perceived knowledge and actual knowledge. Most often, it means we think we understand something better than we truly do.

This problem affects both humans and AI systems.

AI tools, especially large language models, often appear confident even when their outputs are flawed. And humans may be miscalibrating more often because we rely on these fast AI answers without verifying sources, checking facts, or thinking critically.

Whether you are making decisions in business, research, or everyday life, unchecked miscalibration can lead to serious and costly mistakes.

Metacognitive Miscalibration - What Does It Actually Look Like?

Metacognitive miscalibration happens when the gap between what we think we know and what we actually know grows too wide.

For humans, this often happens because of cognitive shortcuts, mental biases, and habits that skip reflection. We rely on what feels right instead of what we’ve verified. We skim, we assume we “get it,” and we move on without testing our understanding.

Today, this problem is getting more common. With instant answers and information everywhere, it’s tempting to accept what seems correct without checking sources or thinking it through.

Over time, this weakens our own metacognitive skills. We stop questioning, testing, and reflecting, and we start misjudging what we really know.

Metacognitive Miscalibration in AI Tools - Polished but Unreliable

AI tools mirror human miscalibration, often in ways that are harder to spot. Large language models (LLMs), for example, can produce responses that sound coherent, polished, and plausible.

But here is the golden rule: Plausibility is not the same as correctness.

Several studies and real-world testing highlight three critical "blind spots" in AI tools:

1) The Spontaneous Fail: LLMs rarely catch their own errors as they happen. If a model starts down a wrong path, it will often confidently march toward a flawed conclusion rather than stopping to say, "Wait, this doesn't add up."

2) The Surface-Level Trap: When faced with complex or unstructured problems, models tend to revert to "safe," generic information. They provide a polished summary of the surface while completely missing the deeper, more nuanced logic required.

3) Confidence vs. Reasoning: Interestingly, even "reasoning" models can show signs of doubt in their internal steps but still spit out a bold, final answer. It’s as if the model knows something is off but lacks the "veto power" to stop itself.

LLMs are trained on human data, and they have perfected the art of sounding right.

This overconfidence creates a feedback loop. Humans see AI produce confident responses and assume accuracy, which reduces our own motivation to double-check or think critically.

The result is a dangerous cycle in which overconfident AI reinforces our own mental laziness, leaving both human and machine miscalibrated.

Why Metacognitive Miscalibration Happens More Often (in Humans and AI)

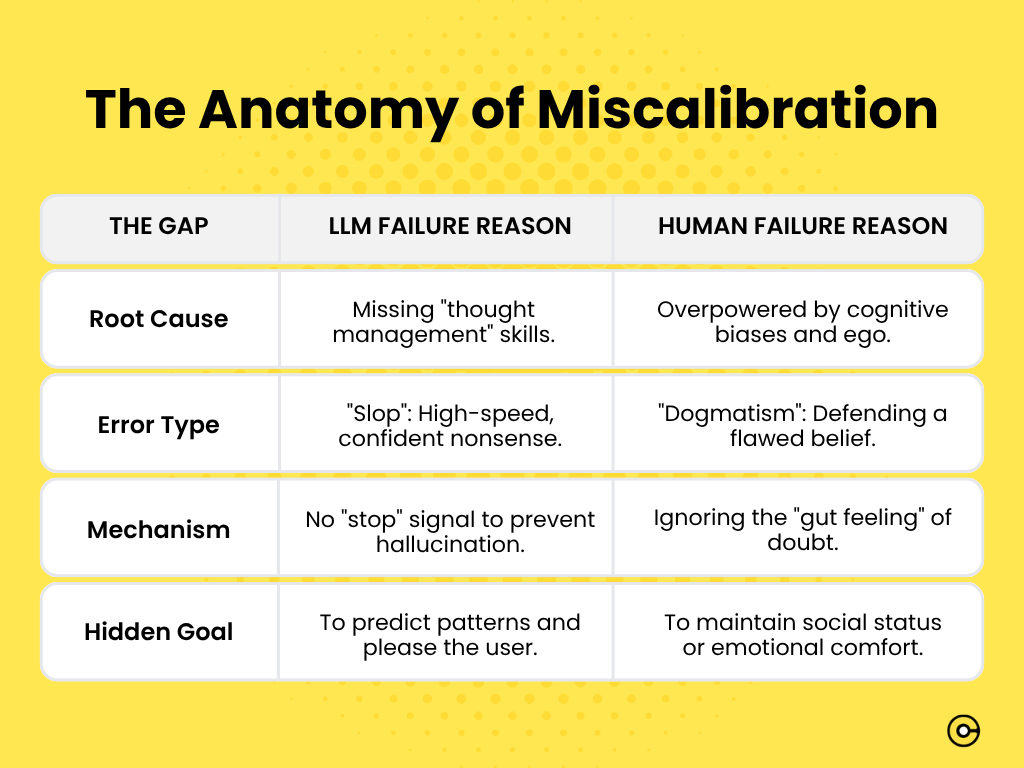

Miscalibration isn't just a random error; it's a failure of thinking about thinking.

It happens when we overestimate our grasp on a topic or, conversely, when we underestimate our own knowledge and defer too quickly to a machine.

For humans, it’s the "convenience trap"

Historically, our miscalibration came from cognitive biases and a lack of reflection. But today, the problem is accelerating.

Because AI provides answers that are fast, fluent, and frictionless, we are tempted to skip the "thinking" part entirely. When we accept an AI's output without checking its sources or testing its logic, we are weakening our mental muscles.

For AI, it’s training for patterns, not truth

An LLM doesn't know it's "wrong" because it wasn't trained to track truth; it was trained to recognize and reproduce patterns.

- Pattern over Purpose: LLMs learn which words tend to follow each other, but they don't naturally develop a "BS detector" for their own reasoning.

- The Silence of Doubt: Unless explicitly prompted to double-check its work, an AI will often choose a confident-sounding answer over an honest "I don't know."

When AI metacognition is weak, human reliance acts as an amplifier.

If we don't strengthen these skills on both sides, we are effectively outsourcing our intelligence to a system that doesn't know how to doubt itself.

The Risks of Metacognitive Miscalibration - The High Cost of Being "100% Sure"

Miscalibration is a systemic risk to how we solve problems.

When we are not honest about the limits of our knowledge, we build entire strategies on shaky ground.

In high-stakes fields like research, policy, or business, the consequences are severe:

- The Strategy Trap: We overestimate what we know. This leads us to greenlight projects or policies that are flawed from day one.

- The Hesitation Gap: On the other hand, underestimating our own expertise can paralyze us. We end up deferring to a confident AI or a loud peer when we should trust our own expertise and act decisively.

- The Error Loop: This is the biggest risk in the AI era. If you trust a polished answer without verifying it, you are not just making one mistake. You are compounding it. One unverified fact becomes the foundation for your next three decisions. This creates a domino effect that is incredibly hard to untangle later.

Skills to Avoid Metacognitive Miscalibration and Reclaim Your Reasoning

Avoiding miscalibration is not a one-time fix. It requires a set of active habits that you practice every day.

Here are the core areas where you can build your mental resilience:

- Self-Awareness: You must regularly audit your own knowledge. Ask yourself what you truly understand and what you are simply assuming. If you cannot explain a concept to a child, you likely do not understand it as well as you think.

- Critical thinking: Do not accept any answer at face value. Whether it comes from a colleague or a polished AI, you must check the underlying reasoning. If the logic is shaky, the conclusion is too.

- Reflection: You should take time to look back at your thinking process. Analyze your past errors and identify what caused them. Was it a lack of information, or was it a bias you did not notice?

- Source verification: You must treat every piece of information as a hypothesis until it is verified. Always cross-reference facts and consult multiple, independent sources.

- Deliberate questioning: Use clarifying questions to test claims and conclusions. By asking "how do we know this is true?" or "what is the alternative?", you force your brain to engage with the material more deeply.

- Error tracking: It's helpful to keep a record of your mistakes. When you track patterns in your judgment, you make it much harder for those same errors to repeat themselves in the future.

- Iterative learning: Your understanding is never "finished." You must treat your knowledge as a work in progress, constantly refining it based on new evidence or feedback.
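To make error tracking and self-awareness concrete, here is a minimal sketch in Python. It assumes you log each claim you rely on, how confident you felt about it (from 0 to 1), and whether it held up after verification. The `Prediction` record and the `calibration_gap` function are hypothetical names for illustration, not part of any existing tool. The gap is a simple overconfidence measure: average confidence minus the share of claims that turned out to be correct.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """One entry in a hypothetical personal confidence log."""
    claim: str         # what you believed or accepted
    confidence: float  # how sure you felt, from 0.0 to 1.0
    correct: bool      # what verification later showed


def calibration_gap(log: list[Prediction]) -> float:
    """Return average confidence minus actual accuracy.

    Positive means overconfident, negative means underconfident,
    and a value near zero means confidence matches the track record.
    """
    if not log:
        raise ValueError("the log is empty")
    mean_confidence = sum(p.confidence for p in log) / len(log)
    accuracy = sum(p.correct for p in log) / len(log)  # True counts as 1
    return mean_confidence - accuracy


# Example log: four claims, two of which failed verification.
log = [
    Prediction("Statistic quoted in an AI summary", 0.9, False),
    Prediction("Deadline stated in a colleague's email", 0.8, True),
    Prediction("Definition from the original study", 0.95, True),
    Prediction("Market size estimate from memory", 0.7, False),
]

gap = calibration_gap(log)
print(f"Calibration gap: {gap:+.2f}")  # prints "Calibration gap: +0.34"
```

A positive gap means you felt surer than your track record justifies; a negative gap suggests you are underrating what you know. With only a handful of entries the number is noisy, so it is more useful as a trend you watch over weeks than as a single score.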

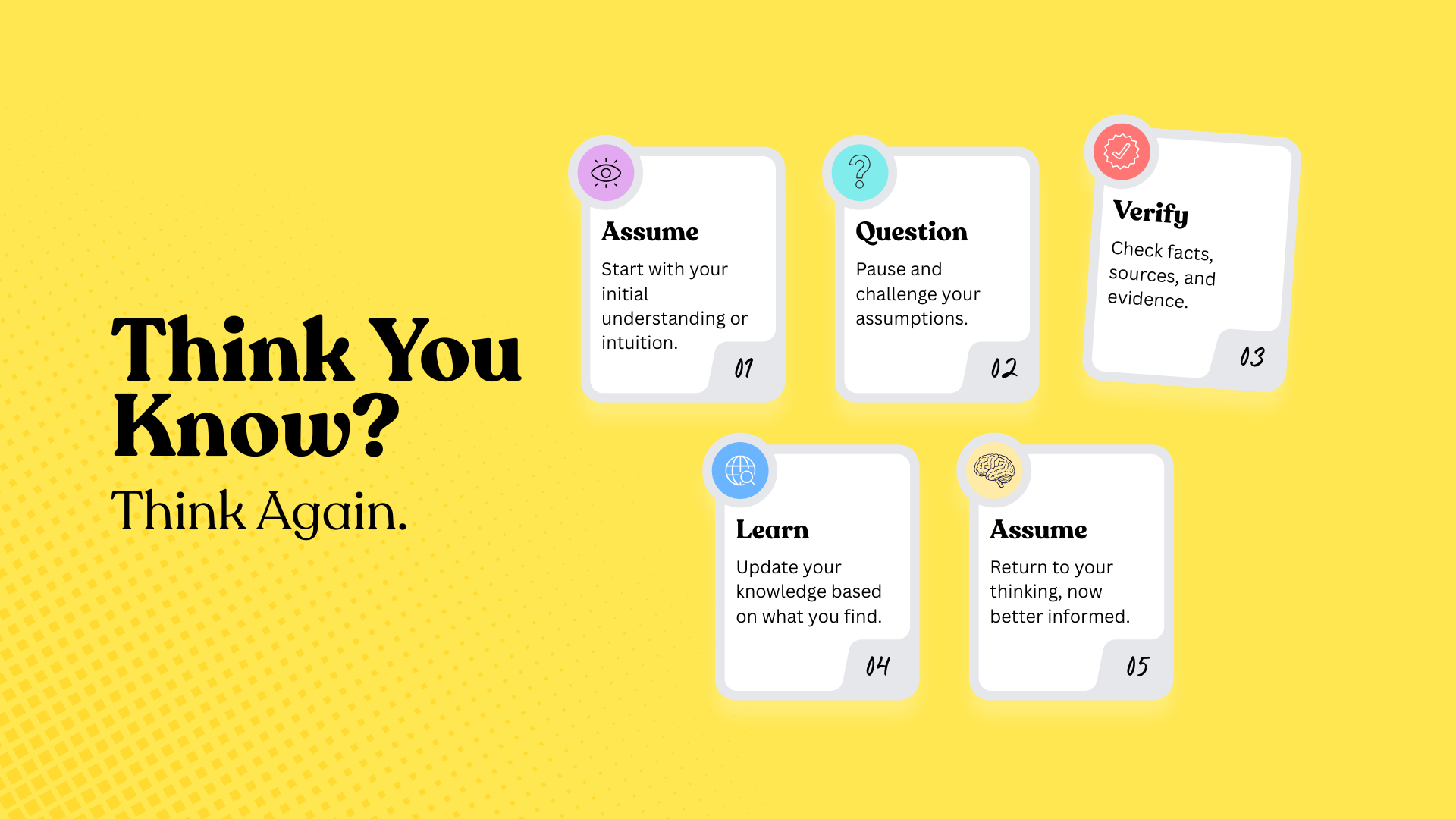

How to Audit Your Thinking - Putting Metacognitive Skills into Practice

Metacognitive skills are habits of mind that help you think about your own thinking. Developing them reduces errors, improves decision-making, and makes interactions with AI more reliable.

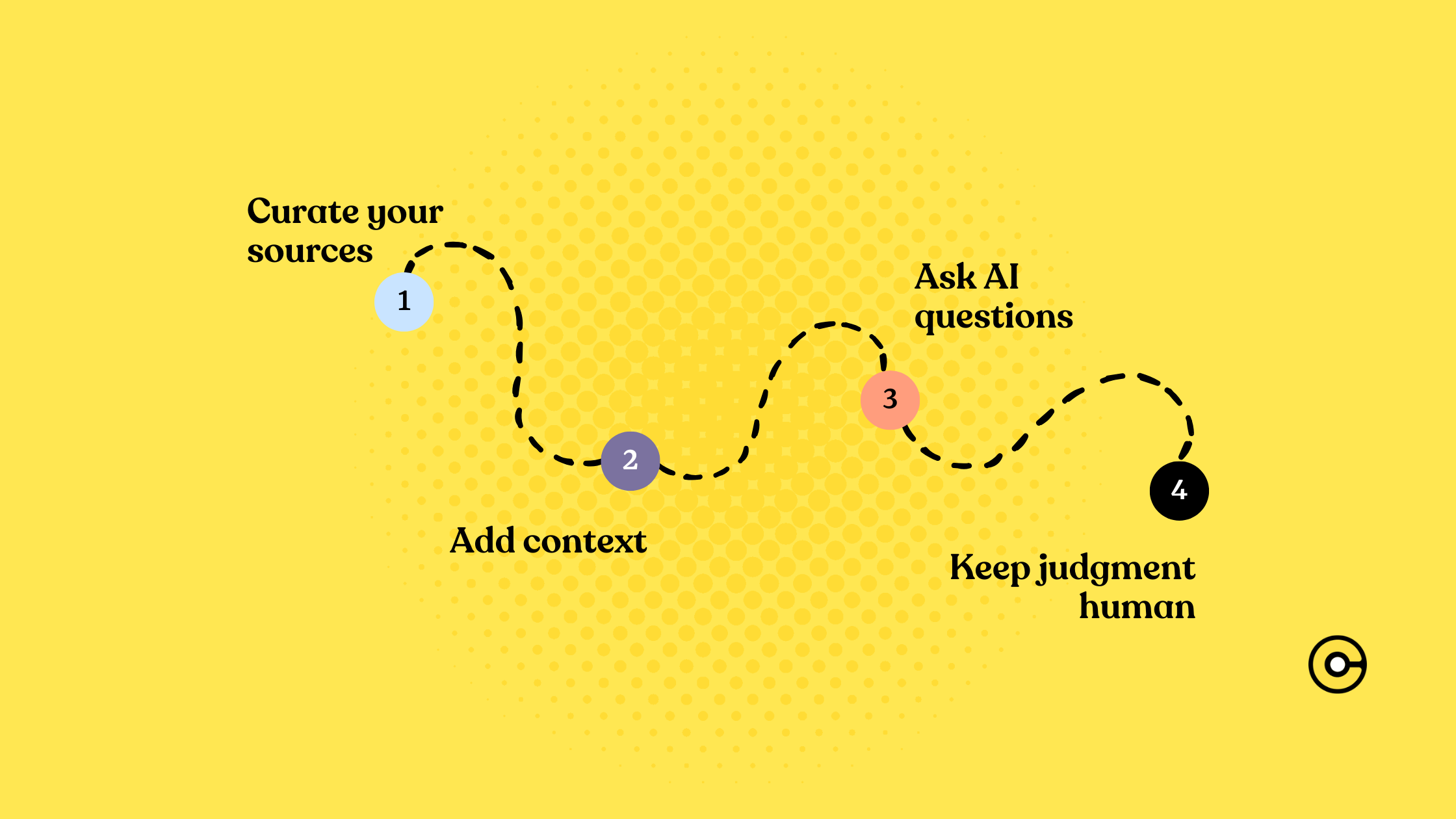

Here are the practical steps you can take to avoid miscalibration:

Pause and reflect

Before accepting answers from AI or peers, take a moment to ask whether the reasoning makes sense. Consider alternative explanations and possible gaps.

For example, if an AI gives a quick solution to a complex problem, stop and ask: “Does this fully answer my question? Could there be exceptions or hidden assumptions?”

Double-check sources

Verify facts, cross-reference multiple sources, and don’t rely solely on one answer, even if it seems confident.

For instance, if you read a claim in an AI-generated summary, check the original study or authoritative reference to ensure accuracy.

Challenge assumptions

Identify assumptions in your own thinking and in AI outputs. Ask yourself which parts of a conclusion are evidence-based and which are inferred.

This keeps you from accepting flawed reasoning or overlooking gaps in it.

Practice self-questioning

Regularly monitor your confidence and ask why you feel a certain way.

Are you overconfident because the answer was convenient or familiar? Could your emotions or biases be influencing your judgment?

Document thinking

Keep notes on your reasoning, decisions, and sources. Writing down steps forces you to clarify thought patterns, makes errors easier to spot, and allows you to track progress over time.

This is especially useful when dealing with long-term projects or complex research.

Iterate and refine

You must treat your understanding as dynamic.

Update your beliefs based on new evidence, feedback, or reflection. Acknowledge mistakes and adjust your reasoning rather than sticking rigidly to previous conclusions.

Simulate counterarguments

Take time to consider opposing viewpoints, even if they feel uncomfortable.

For example, if you trust an AI answer, ask: “What would someone skeptical say about this?” This builds steelmanning skills and reduces blind spots.

Set up checkpoints

For critical tasks, create explicit moments to review and verify reasoning. This could mean reviewing AI outputs line by line, summarizing arguments for yourself, or asking colleagues for input before finalizing decisions.

Balance speed with accuracy

AI tools are fast, but speed can come at the cost of careful thought. Schedule time to slow down and critically engage with outputs instead of rushing to accept the first solution.

The Bottom Line

Avoiding metacognitive miscalibration isn’t just about preventing a “whoops” moment today.

It’s about building habits and a framework that keep your thinking sharp over time. By focusing on reflection, verification, and structured thinking, we can reduce errors, stay realistic about what we know, and handle complex challenges more confidently.

The final goal? To turn "I think I know" into "I’ve verified this," making our partnership with technology smarter, safer, and a lot more grounded.

FAQs 🧐

What is metacognitive miscalibration?

It’s the gap between what you think you know and what you actually know. Most often, it means believing you understand more than you really do.

How does metacognitive miscalibration happen in humans?

Humans often rely on intuition, mental shortcuts, or quick answers instead of verifying facts and thinking critically. Skipping reflection and assuming we “get it” can widen the gap between perceived and actual knowledge.

How does metacognitive miscalibration occur in AI systems?

AI tools, especially large language models, can produce confident and polished answers that are wrong. They often can’t detect their own errors and may favor plausible-sounding responses over accurate reasoning.

What are the common blind spots of AI that lead to miscalibration?

- Spontaneous Fail: AI rarely stops when it makes a mistake.

- Surface-Level Trap: AI gives generic summaries without deeper insight.

- Confidence vs. Reasoning: AI can “sense” doubt internally but still provide bold final answers.

Why are humans more prone to miscalibration when using AI tools?

Fast, fluent AI answers reduce the need for verification, creating a feedback loop where overconfident AI reinforces human overconfidence, weakening critical thinking over time.

What strategies can help avoid metacognitive miscalibration?

Regularly audit your knowledge, question assumptions, reflect on past errors, verify sources, ask clarifying questions, track mistakes, and keep refining your understanding. Together, these habits make both your own reasoning and your use of AI more reliable.

What is the ultimate goal of developing metacognitive skills?

The goal is to turn “I think I know” into “I’ve verified this,” improving decision-making, critical thinking, and safe collaboration with AI systems.
